CLI Solved This Problem 50 Years Ago. MCP Still Has Not.

You connected 8 MCP servers to your AI agent. You have not typed a word yet. Thirty-three percent of your context window is already gone.

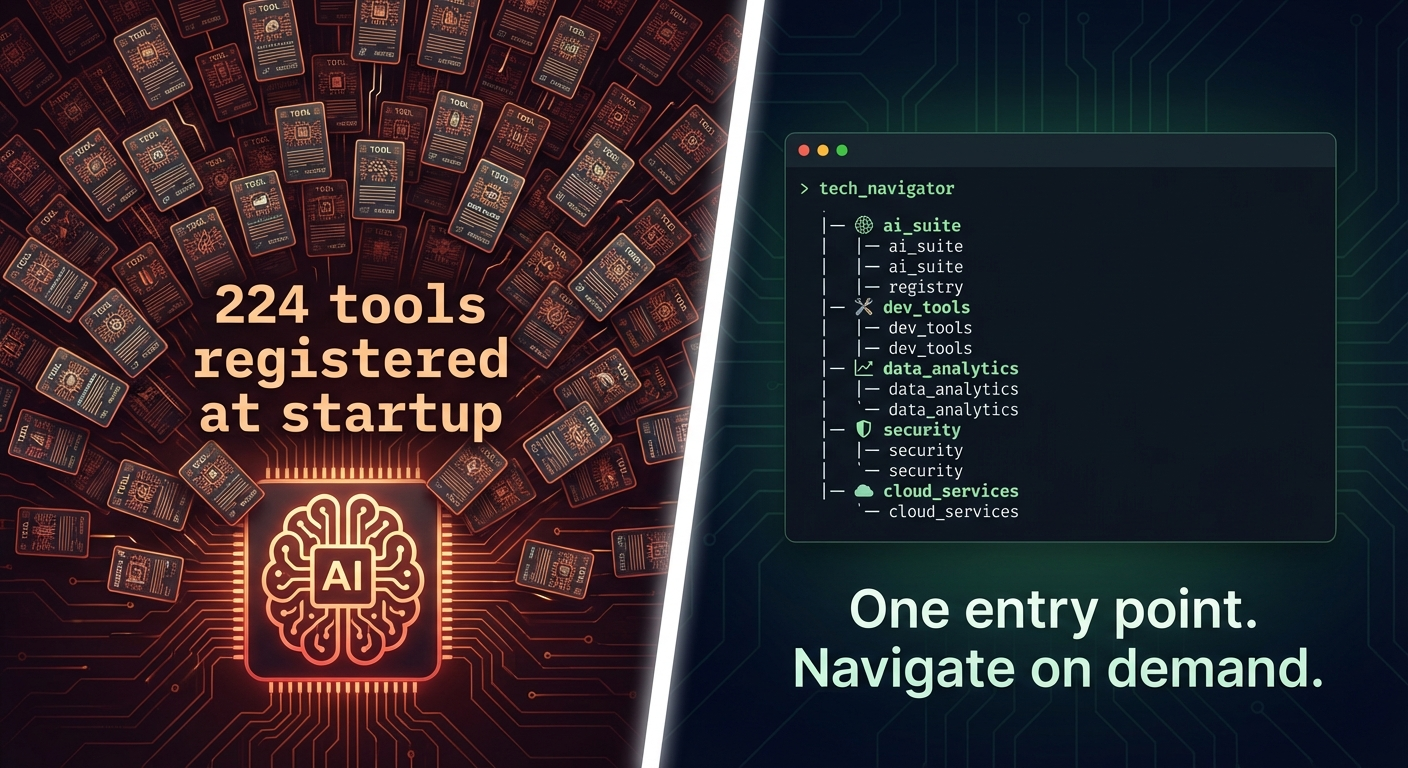

That is not a hypothetical. A developer running 8 production MCP servers measured it: 224 tools, each with a name, a description, and an input schema, all registered at startup and dumped wholesale into the model context. At roughly 295 tokens per tool schema, that is 66,000 tokens consumed before the first message. Claude Sonnet has a 200,000-token context window. You have already spent a third of it on tool definitions the model may never use.
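The arithmetic is easy to check. Here is a small helper that assumes a flat per-schema average. The 295-token figure is an average, so real schemas will vary.

```python
def schema_overhead(num_tools: int, tokens_per_tool: int, context_window: int) -> tuple[int, float]:
    """Tokens spent on tool schemas at startup, and the fraction of the window they use."""
    spent = num_tools * tokens_per_tool  # every schema is sent up front, before any message
    return spent, spent / context_window


spent, share = schema_overhead(num_tools=224, tokens_per_tool=295, context_window=200_000)
print(f"{spent:,} tokens, {share:.0%} of the context window")
# prints "66,080 tokens, 33% of the context window"
```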

This is the MCP context bloat problem. It is real, it is getting worse, and the community has been arguing about how to fix it for six months without landing on an answer.

The answer, it turns out, has been sitting in your terminal since 1971.

How Bad Does It Get?

The 8-server example is a moderate setup. Power users run more.

I maintain tplink-omada-mcp, one of the most complete MCP implementations for a network controller. In issue #69, users reported performance and quality problems. At that point, the server had 198 implemented tools covering only 29% of the available API. Not a complete implementation. The token cost: roughly 19,900 tokens. On a GPT-4o session with a 128K context window, that is about 16% gone before a single character of conversation.

The more complete you make your MCP server, the worse it gets.

MCP's current behavior is all-or-nothing. Every tool from every connected server registers at startup. No lazy loading. No filtering. No grouping. The model receives the full catalog whether it needs one tool or all of them.

Developers building comprehensive MCP servers are being penalized for being thorough.

What the Community Tried

The MCP community recognized this problem. Several approaches emerged.

Primitive Groups is the official spec proposal. Discussion #1567 on the modelcontextprotocol repo has collected over 100 comments since September 2025. The proposal introduces namespaced groupings for tools, resources, and prompts organized into categories. Clients could selectively load groups relevant to the current task.

It sounds like the right idea. The problem: after six months and 100 comments, the discussion is stuck on how to serialize group membership efficiently. Three approaches have been debated, each with unacceptable tradeoffs. More critically, even if adopted, Primitive Groups does not reduce context on its own. It provides structure and labels. Filtering still requires client-side implementation, which is out of scope for the spec. The proposal solves organization, not bloat.
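The sketch below shows why labels on their own do not shrink anything. It assumes a hypothetical `group` key on each tool. That key is a placeholder for whatever encoding the proposal eventually settles on, and the tool names are also invented. The tools only leave the context when the client chooses to filter them.

```python
from typing import Optional

# Hypothetical catalog: the "group" key stands in for whatever group-membership
# encoding the Primitive Groups proposal settles on. Tool names are invented.
TOOLS = [
    {"name": "list_vlans", "group": "network", "description": "List VLANs on a site"},
    {"name": "create_vlan", "group": "network", "description": "Create a VLAN"},
    {"name": "list_acl_rules", "group": "firewall", "description": "List firewall ACL rules"},
    {"name": "reboot_device", "group": "devices", "description": "Reboot a device"},
]


def tools_sent_to_model(tools: list[dict], wanted_groups: Optional[set[str]] = None) -> list[dict]:
    """Labels alone change nothing; only a client-side filter reduces what is sent."""
    if wanted_groups is None:
        return tools  # grouped, but every tool still lands in context
    return [t for t in tools if t["group"] in wanted_groups]


print(len(tools_sent_to_model(TOOLS)))  # 4
print([t["name"] for t in tools_sent_to_model(TOOLS, {"network"})])  # ['list_vlans', 'create_vlan']
```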

smartmcp takes a different approach. It is a production-usable proxy that sits between your AI client and your MCP servers. On startup, it indexes all upstream tool schemas using FAISS vector embeddings. The model sees exactly one tool: search_tools. The model calls it with a natural-language query; smartmcp runs a semantic search and returns the top matching schemas. 224 tools become 6 in context. The measured reduction: 97%.

It works. The cost: FAISS, Python, sentence-transformer dependencies, a startup indexing phase, and an extra round trip per tool discovery. It also struggles when the agent wants to browse capabilities it does not know exist. You can only search for what you can describe.
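The search_tools flow can be shown in a few lines, provided the FAISS embeddings are swapped for something much cruder. This toy version scores tools by word overlap (Jaccard similarity). It is an assumed stand-in that shows the shape of the idea: index once, then return only the top matches. It is not how smartmcp ranks tools.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for a sentence embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ToolIndex:
    """Toy search_tools: Jaccard word overlap instead of FAISS vector similarity."""

    def __init__(self, tools: list[dict]) -> None:
        # "Startup indexing": tokenize every tool's name and description once.
        self._entries = [(tokenize(t["name"] + " " + t["description"]), t) for t in tools]

    def search_tools(self, query: str, top_k: int = 5) -> list[dict]:
        """Return up to top_k tool definitions sharing at least one word with the query."""
        q = tokenize(query)
        scored = []
        for words, tool in self._entries:
            overlap = len(q & words)
            if overlap:
                scored.append((overlap / len(q | words), tool["name"], tool))
        # Highest score first; the tool name breaks ties deterministically.
        scored.sort(key=lambda entry: (-entry[0], entry[1]))
        return [tool for _, _, tool in scored[:top_k]]


index = ToolIndex([
    {"name": "list_vlans", "description": "List all VLANs on a site"},
    {"name": "create_vlan", "description": "Create a new VLAN"},
    {"name": "reboot_device", "description": "Reboot an access point or switch"},
])
print([t["name"] for t in index.search_tools("reboot a switch", top_k=1)])  # ['reboot_device']
```

Only the returned schemas enter the context. The limitation described above still applies, though: a query that uses none of a tool's words will never surface it.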

Both approaches reach for complexity. One adds protocol infrastructure. The other adds ML infrastructure. Neither asks the obvious question: has this problem been solved before?

What the Terminal Got Right

Open a terminal. Type git --help.

Git does not print its entire manual. It prints categories: start a working area, work on the current change, examine the history, and so on. You navigate from there. git commit --help gives you the specifics for commit. The context grows only as deep as you choose to explore.

This is hierarchical, on-demand discovery. It is the design pattern every CLI tool worth using has followed for decades. It works because it respects the fundamental constraint of the interface: limited, precious output space. You do not front-load everything. You give the user an entry point and let them navigate.

An LLM context window is the same constraint. It is finite, shared with everything else in the conversation, and degrades in quality as it fills. Front-loading 224 tool schemas is the equivalent of dumping a 600-page manual into the terminal on startup. Nobody would design a CLI that way.

The MCP community is building CLIs that do exactly that.
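The git pattern is easy to reproduce. Here is a minimal sketch using Python's standard argparse module with a hypothetical `netctl` tool. The tool, its groups, and its commands are all invented for illustration. The top-level help lists only the groups, and each group's help lists only that group's commands. Flags appear only at the leaf.

```python
import argparse


def build_cli() -> tuple[argparse.ArgumentParser, dict[str, argparse.ArgumentParser]]:
    """Two-level CLI: groups at the top, commands one level down, flags at the leaves."""
    root = argparse.ArgumentParser(prog="netctl", description="Manage a network controller")
    groups = root.add_subparsers(dest="group", metavar="<group>")

    network = groups.add_parser("network", help="VLANs, subnets, routing")
    net_cmds = network.add_subparsers(dest="command", metavar="<command>")
    create_vlan = net_cmds.add_parser("create-vlan", help="Create a VLAN")
    create_vlan.add_argument("--name", required=True)
    create_vlan.add_argument("--vlan-id", type=int, required=True)

    devices = groups.add_parser("devices", help="Access points, switches, gateways")
    dev_cmds = devices.add_subparsers(dest="command", metavar="<command>")
    reboot = dev_cmds.add_parser("reboot", help="Reboot a device")
    reboot.add_argument("--mac", required=True)

    return root, {"network": network, "devices": devices}


root, group_parsers = build_cli()
print(root.format_help())                      # groups only, like `git --help`
print(group_parsers["network"].format_help())  # network commands only, no flags yet
```

Each level of help costs only what that level contains. That is the property an agent's tool list lacks today.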

The Framework That Already Solved It (By Accident)

One AI agent framework does not have this problem. Not because it was designed around it, but because of an architectural quirk that nobody documented as a pattern.

OpenClaw accesses MCP servers through a CLI proxy called mcporter. The model does not see individual MCP tool schemas. It sees mcporter as a single tool with subcommands: mcporter list, mcporter call server.tool, mcporter list server --schema. Tool definitions never enter the context unless the agent explicitly requests them.

The result is O(1) context cost regardless of how many MCP tools exist upstream. One entry point. Drill down on demand. Exactly the git pattern.

This happened by accident. mcporter exists for authentication, configuration management, and type generation. Nobody designed it to solve context bloat. The architecture just landed in the right place.

The contrast with other systems:

| System | MCP Integration | Context Cost |
|---|---|---|
| Claude Desktop / Cursor | Native, all tools at startup | Scales with every tool added |
| nanobot | Native, auto-discovered | Same problem |
| smartmcp | Semantic proxy | O(1) at inference, O(n) at startup for indexing |
| OpenClaw | CLI proxy via mcporter | O(1), single entry point, schemas on demand |

OpenClaw did not formalize this as a design principle. Nobody wrote it down. But it works, its context cost stays flat no matter how many tools sit upstream, and it requires nothing but a proxy and a naming convention.
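The table's Context Cost column can be made concrete with a rough measurement. The sketch below compares the serialized size of a flat tool list with the size of a single proxy entry point, using characters as a crude proxy for tokens. The upstream tools are generated placeholders. The proxy schema borrows the mcporter subcommands named above, but its shape is an assumption for illustration, not how OpenClaw actually registers mcporter.

```python
import json


def make_upstream_tools(n: int) -> list[dict]:
    """n placeholder MCP-style tool definitions (name, description, inputSchema)."""
    return [
        {
            "name": f"tool_{i}",
            "description": f"Hypothetical upstream tool number {i}",
            "inputSchema": {"type": "object", "properties": {"id": {"type": "string"}}},
        }
        for i in range(n)
    ]


# Illustrative single entry point in the spirit of mcporter; this schema is an
# assumption for the sketch, not OpenClaw's real registration.
PROXY_TOOL = {
    "name": "mcporter",
    "description": "Run 'mcporter list', 'mcporter list <server> --schema', "
                   "or 'mcporter call <server>.<tool>'",
    "inputSchema": {
        "type": "object",
        "properties": {"args": {"type": "array", "items": {"type": "string"}}},
    },
}


def startup_chars(tools: list[dict]) -> int:
    """Characters of tool definitions in context before the first message."""
    return len(json.dumps(tools))


for n in (10, 224, 650):
    flat = startup_chars(make_upstream_tools(n))
    proxied = startup_chars([PROXY_TOOL])
    print(f"{n:>3} upstream tools: flat={flat:,} chars, proxied={proxied:,} chars")
```

The flat column grows linearly with n, while the proxied column stays the same on every row.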

What MCP Should Formalize

The spec change is simple. MCP servers should be able to declare a lazy discovery mode.

In lazy mode, a server exposes one tool: list_categories(). The model calls it, gets back a list of capability groups with brief descriptions. It calls describe_category("network") and receives the 8 tool schemas relevant to networking. It picks the one it needs and calls it. Context stays minimal until the agent deliberately expands it.

No embedding infrastructure. No FAISS. No startup indexing. No extra dependencies. Just a protocol convention that every MCP server can implement in an afternoon.
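Here is a minimal sketch of that lazy mode as plain Python objects, with no MCP SDK involved. `list_categories` and `describe_category` follow the names used above. `register`, `call_tool`, and the example tools are hypothetical, and a real server would expose these calls as MCP tools rather than methods.

```python
from typing import Any, Callable


class LazyToolServer:
    """Hypothetical lazy-discovery server: categories first, schemas on demand."""

    def __init__(self) -> None:
        self._categories: dict[str, dict[str, Any]] = {}
        self._handlers: dict[str, Callable[..., Any]] = {}

    def register(self, category: str, summary: str, name: str,
                 schema: dict, handler: Callable[..., Any]) -> None:
        # The summary is kept from the first registration in each category.
        cat = self._categories.setdefault(category, {"summary": summary, "tools": {}})
        cat["tools"][name] = schema
        self._handlers[name] = handler

    def list_categories(self) -> list[dict[str, str]]:
        """Step 1: the only discovery call the model needs at startup."""
        return [{"name": n, "summary": c["summary"]} for n, c in self._categories.items()]

    def describe_category(self, category: str) -> list[dict[str, Any]]:
        """Step 2: expand one category into full tool schemas."""
        tools = self._categories[category]["tools"]
        return [{"name": n, "inputSchema": s} for n, s in tools.items()]

    def call_tool(self, name: str, arguments: dict[str, Any]) -> Any:
        """Step 3: invoke a tool the model has already discovered."""
        return self._handlers[name](**arguments)


server = LazyToolServer()
server.register("network", "VLANs and subnets", "create_vlan",
                {"type": "object", "properties": {"vlan_id": {"type": "integer"}}},
                lambda vlan_id: f"created VLAN {vlan_id}")
server.register("devices", "Access points, switches, gateways", "reboot_device",
                {"type": "object", "properties": {"mac": {"type": "string"}}},
                lambda mac: f"rebooting {mac}")

print(server.list_categories())
print(server.describe_category("network"))
print(server.call_tool("create_vlan", {"vlan_id": 20}))  # created VLAN 20
```

Adding a hundred more tools to a category changes what `describe_category` returns for that category. It does not change what `list_categories` puts in context at startup.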

The client benefits immediately. The model benefits immediately. Tool count scales without context penalty. A 650-tool server costs the same as a 10-tool server until the agent decides to explore.

The pattern is validated by 50 years of CLI design and by at least one production agent framework running it right now. It just needs to be written down.

What This Means If You Are Building MCP Servers

Stop thinking about your MCP server as a flat list of tools. Start thinking about it as a CLI.

Your server has categories. Network tools. Firewall tools. Device management tools. Those categories should be discoverable before the schemas are. A well-designed server exposes its shape before it exposes its details.

The category filter approach (environment variables that limit which tool groups register at startup) is a step in the right direction. It is what Omada MCP shipped in response to issue #69. It cuts context by 62–85% depending on the profile. But it is static: a human configures it once, and the model cannot adapt at runtime. It is a workaround, not a solution.
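Here is a sketch of what such a static filter looks like. Both the `TOOL_CATEGORIES` variable and the catalog are hypothetical, not Omada MCP's actual configuration. The category list is read once when the server starts.

```python
import os
from typing import Mapping, Optional

# Hypothetical catalog and variable name; the real Omada MCP profiles may differ.
ALL_TOOLS = {
    "network": ["list_vlans", "create_vlan"],
    "firewall": ["list_acl_rules"],
    "devices": ["list_devices", "reboot_device"],
}


def tools_to_register(env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Decide once, at startup, which tools to register from a comma-separated category list."""
    env = os.environ if env is None else env
    wanted = {c.strip() for c in env.get("TOOL_CATEGORIES", "").split(",") if c.strip()}
    if not wanted:  # unset or empty: register everything, as before
        wanted = set(ALL_TOOLS)
    return [tool for cat, tools in ALL_TOOLS.items() if cat in wanted for tool in tools]


print(tools_to_register({"TOOL_CATEGORIES": "network,devices"}))
# ['list_vlans', 'create_vlan', 'list_devices', 'reboot_device']
```

Because the decision happens before the conversation starts, a model that later needs the firewall tools has no way to ask for them.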

The real solution is dynamic. The model discovers what it needs, when it needs it. Context grows with purpose.

The MCP community has been arguing about how to add this to the protocol for six months. Meanwhile, the design pattern has been sitting in every terminal on earth since before most of the people arguing were born.

The Takeaway

Context windows are not free. Every token spent on a tool schema the model never uses is a token that could have held conversation, reasoning, or retrieved knowledge.

MCP's all-at-startup registration model made sense when servers were small and setups were simple. Neither of those is true anymore. Servers are growing. Users are connecting more of them. The cost compounds with every tool added.

The fix is not complex. CLI tools solved the exact same constraint — limited, precious interface space — with hierarchical discovery. Give the model an entry point. Let it navigate. Charge it only for what it actually explores.

Fifty years of tooling design figured this out. MCP just needs to catch up.
