The Karpathy Loop Beyond ML: How Any Business Can Run 36,500 Experiments a Year

Most companies run about 30 experiments a year. They call it being data-driven.

The next generation of companies will run 36,500.

Not because they hired better marketers or smarter product managers. Because they built a loop.

Andrej Karpathy released autoresearch on March 8, 2026, and the AI community immediately read it as a machine learning tool. What most people missed is that the framework is not about ML at all. It is about something more fundamental: iteration velocity as a structural business advantage. AI is simply what finally makes the loop fast enough to matter.

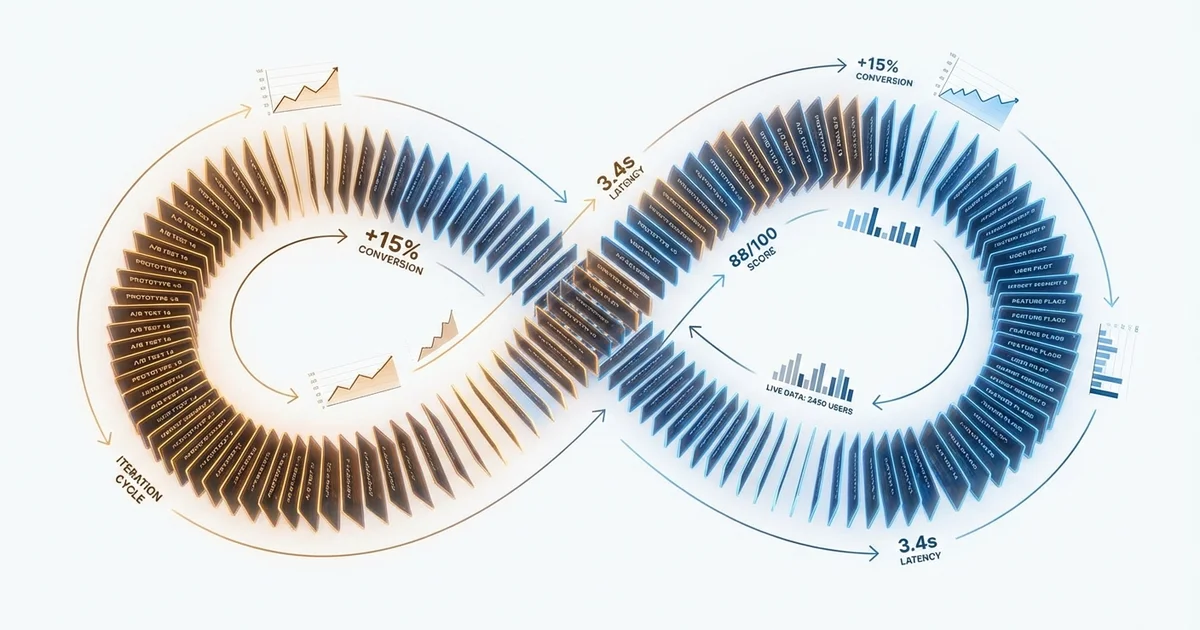

There are three requirements: an automated experiment the agent can run without human intervention; a measurable, objective metric (actual numbers, not vibes); and a version control mechanism that cleanly reverts failed experiments. If you have all three, you have an autoresearch system. It does not matter whether the experiment is training a language model or testing a subject line.
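The three requirements map onto a very small program. The sketch below is a hypothetical illustration, not autoresearch’s actual code: `propose` stands in for the agent’s change, `evaluate` for the automated experiment and its metric, and a list of committed states plays the role of version control (a revert simply means the candidate is never committed). It assumes higher scores are better; the toy problem exists only so the loop runs.

```python
import random
from typing import Callable, List, Tuple

def karpathy_loop(
    baseline: dict,
    propose: Callable[[dict, random.Random], dict],
    evaluate: Callable[[dict], float],
    iterations: int,
    seed: int = 0,
) -> Tuple[dict, List[Tuple[int, float, bool]]]:
    """Hill-climb from `baseline`, keeping a change only if the metric improves."""
    rng = random.Random(seed)
    committed = [dict(baseline)]      # version history: every accepted state, oldest first
    best_score = evaluate(baseline)
    log = []
    for step in range(iterations):
        candidate = propose(committed[-1], rng)   # the agent changes something
        score = evaluate(candidate)               # automated experiment + objective metric
        kept = score > best_score
        if kept:
            committed.append(candidate)           # commit the improvement
            best_score = score
        # a rejected candidate is never committed, which is the "revert"
        log.append((step, score, kept))
    return committed[-1], log


# Toy problem: the "experiment" scores a single number and is best at x = 3.
def propose(state: dict, rng: random.Random) -> dict:
    return {"x": state["x"] + rng.uniform(-1, 1)}

def evaluate(state: dict) -> float:
    return -(state["x"] - 3) ** 2

best, log = karpathy_loop({"x": 0.0}, propose, evaluate, iterations=200)
print(best, sum(kept for _, _, kept in log), "changes kept")
```

Swapping `propose` and `evaluate` is all it takes to point the same loop at a training script, a subject line, or a pricing page; the loop itself does not change.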

The loop is the same. The compounding is the same. The speed advantage is the same.

30 Experiments a Year vs. 36,500

Within days of autoresearch going viral, Eric Siu, founder of digital marketing agency Single Grain, posted a thread that became one of the month’s most shared takes:

“Most marketing teams run ~30 experiments a year. Maybe 52 if they’re ‘good’. New landing page. New ad creative. Maybe a subject line test. That’s considered ‘data-driven marketing.’ But the next generation of marketing systems will run 36,500+ experiments per year.”

The math is not complicated. A standard marketing A/B test cycle at most companies: Week 1, someone has an idea. Week 2, creative builds variants. Week 3, the test runs. Week 4, results are analysed at a meeting. Week 5, a decision is made. Five weeks for one iteration.
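The arithmetic behind the two headline numbers is worth spelling out. This is plain calculation, not data: it assumes one test track runs sequentially at the five-week cadence above, and reads 36,500 a year as 100 experiments a day.

```python
DAYS_PER_YEAR = 365
WEEKS_PER_YEAR = 52

# Human-paced: one iteration every five weeks on a single test track.
human_cycle_weeks = 5
human_iterations = WEEKS_PER_YEAR / human_cycle_weeks   # 10.4 iterations per year

# Agent-paced: 36,500 experiments a year works out to 100 per day.
agent_per_year = 36_500
agent_per_day = agent_per_year / DAYS_PER_YEAR           # 100.0

# Ratio against the "typical" 30-per-year team.
speedup_vs_30 = agent_per_year / 30                      # roughly 1,217x

print(f"{human_iterations:.1f} sequential iterations/year at a 5-week cadence")
print(f"{agent_per_day:.0f} experiments/day for 36,500/year")
print(f"{speedup_vs_30:.0f}x more experiments than a 30-per-year team")
```

One implication: a team that reaches 30 experiments a year on a five-week cycle is already running about three test tracks in parallel.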

An agent running the Karpathy Loop does the same cycle in hours, overnight, without anyone in the room.

Replace the ML training script with a marketing asset. Replace val_bpb (validation bits per byte, the score autoresearch tries to lower) with conversion rate (which you want to raise). The structure is identical. The agent generates a variant by changing one variable (a headline, a CTA, an opening line), deploys it to a test segment, measures the outcome, keeps the winner, reverts the loser, and goes again. By morning you do not have one experiment. You have a ranked log of what worked, what failed, and what the agent is trying next. Your asset is already the best version the agent has found so far.
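“Keep the winner, revert the loser” needs a rule for what counts as winning, or the agent will chase noise. One common choice, swapped in here as an assumption since the source does not name a test, is a one-sided two-proportion z-test on conversions. The function name, the significance level, and the visitor counts are illustrative.

```python
from math import erf, sqrt

def keep_variant(conv_a: int, n_a: int, conv_b: int, n_b: int, alpha: float = 0.05) -> bool:
    """Return True if variant B's conversion rate beats control A at level alpha.

    One-sided two-proportion z-test using the pooled conversion rate.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False                            # no variation at all: nothing to conclude
    z = (p_b - p_a) / se
    p_value = 0.5 * (1 - erf(z / sqrt(2)))      # P(Z >= z) under the null hypothesis
    return p_value < alpha

# Control: 200 conversions from 5,000 visitors (4.0%).
print(keep_variant(200, 5000, 260, 5000))   # variant at 5.2%: keep the new headline
print(keep_variant(200, 5000, 210, 5000))   # variant at 4.2%: revert, lift is within noise
```

Running hundreds of these tests a night also raises the odds of false winners, so a stricter `alpha` or a correction for multiple comparisons is worth considering.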

The most powerful implication is not the speed. It is the history. Every experiment leaves a trace. Over weeks and months, an autoresearch system builds a proprietary map of what resonates with a specific audience: what subject lines get opens, what CTAs convert, what framing triggers action. That map is not replicable by a competitor who has not run those experiments.
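The history can start as an append-only ledger of every experiment, kept and reverted alike. The sketch below uses hypothetical field names and made-up entries; it ranks variants by lift and summarises how often each variable produced a kept change, a crude first version of that audience map.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Experiment:
    variable: str      # what was changed, e.g. "subject_line"
    value: str         # the variant that was tried
    lift: float        # relative change in the metric vs. the control
    kept: bool         # True if committed, False if reverted

def win_rates(ledger: List[Experiment]) -> Dict[str, float]:
    """Share of experiments per variable that produced a kept change."""
    tried: Dict[str, int] = defaultdict(int)
    won: Dict[str, int] = defaultdict(int)
    for exp in ledger:
        tried[exp.variable] += 1
        won[exp.variable] += exp.kept   # a bool adds as 0 or 1
    return {var: won[var] / tried[var] for var in tried}

# Illustrative entries only, not real results.
ledger = [
    Experiment("subject_line", "question", 0.08, True),
    Experiment("subject_line", "emoji", -0.03, False),
    Experiment("cta", "Start free trial", 0.01, False),   # positive but not significant
    Experiment("cta", "See pricing", -0.05, False),
    Experiment("subject_line", "number-led", 0.05, True),
]
ranked = sorted(ledger, key=lambda e: e.lift, reverse=True)
print([e.value for e in ranked[:2]])   # ['question', 'number-led']
print(win_rates(ledger))
```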

Siu: “The companies that win won’t have better marketers. They’ll have faster experiment loops.”

This is a structural advantage, not a talent advantage. You cannot hire your way out of it once you are behind.

Product: No GPU Required

The Karpathy Loop applies to product work anywhere there is an objective metric and an automated test. Notably, none of it requires a GPU: the agent modifies prompts, instructions, or product configurations, not model weights.

We covered the mechanics of how autoresearch agents structure their loops in The Machine That Does Its Own Research. The product applications extend that framing to every business with a feedback signal.

Prompt and agent instruction optimisation. You have an AI agent doing a task: writing summaries, qualifying leads, answering support tickets. An optimising agent tests variations of those instructions against an objective quality score, keeping what improves the score and reverting what does not. Your agent gets measurably better every night without you touching it.
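A minimal sketch of that nightly loop, assuming you already have a fixed evaluation set and some way to grade outputs. `run_agent` and `grade` are hypothetical placeholders for your own model call and scoring rubric; the toy versions at the bottom exist only so the code runs. Keeping the evaluation set fixed across candidates is what makes scores comparable from one night to the next.

```python
from statistics import mean
from typing import Callable, List, Sequence, Tuple

EvalSet = Sequence[Tuple[str, str]]   # (task input, expected output) pairs

def score_instructions(
    instructions: str,
    eval_set: EvalSet,
    run_agent: Callable[[str, str], str],
    grade: Callable[[str, str], float],
) -> float:
    """Average grade of the agent's outputs over a fixed evaluation set."""
    return mean(grade(run_agent(instructions, task), expected) for task, expected in eval_set)

def best_instructions(
    current: str,
    candidates: List[str],
    eval_set: EvalSet,
    run_agent: Callable[[str, str], str],
    grade: Callable[[str, str], float],
) -> str:
    """Keep whichever instruction set scores highest; ties favour the current one."""
    best, best_score = current, score_instructions(current, eval_set, run_agent, grade)
    for cand in candidates:
        s = score_instructions(cand, eval_set, run_agent, grade)
        if s > best_score:
            best, best_score = cand, s
    return best

# Toy stand-ins: the "agent" upper-cases its output when told to,
# and the grader gives 1.0 for an exact match.
def toy_agent(instructions: str, task: str) -> str:
    return task.upper() if "uppercase" in instructions else task

def exact_match(output: str, expected: str) -> float:
    return 1.0 if output == expected else 0.0

evals = [("refund request", "REFUND REQUEST"), ("billing question", "BILLING QUESTION")]
print(best_instructions("Summarise the ticket.", ["Answer in uppercase."], evals, toy_agent, exact_match))
```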

Onboarding flow optimisation. Any SaaS product with an onboarding sequence has a funnel. Each step is a variable. The metric is activation rate. An agent tests variations of copy and sequencing, tries ways to reduce friction, and runs dozens of iterations overnight against real user behaviour. The human reviews the morning report, not each individual test.
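Treating each step as a variable becomes concrete once the funnel is written down. In the sketch below, step names and counts are made up. Activation rate is the product of the step-to-step conversion rates, so (assuming downstream steps keep converting at the same rate) a relative improvement at any single step lifts activation by the same proportion, which makes the weakest step a natural first target.

```python
from math import prod
from typing import List, Tuple

Funnel = List[Tuple[str, int]]   # (step name, users who reached it), in order

def step_rates(funnel: Funnel) -> List[Tuple[str, float]]:
    """Conversion rate into each step from the step before it."""
    return [
        (name, count / prev_count)
        for (_, prev_count), (name, count) in zip(funnel, funnel[1:])
    ]

def activation_rate(funnel: Funnel) -> float:
    """Product of step-to-step rates, which equals last count / first count."""
    return prod(rate for _, rate in step_rates(funnel))

funnel = [
    ("signed_up", 1000),
    ("verified_email", 800),
    ("connected_data", 400),
    ("invited_teammate", 240),   # treated here as the activation event
]
weakest = min(step_rates(funnel), key=lambda s: s[1])
print(f"activation: {activation_rate(funnel):.1%}")   # activation: 24.0%
print(f"weakest step: {weakest[0]} at {weakest[1]:.0%}")
```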

Search and recommendation ranking. Any system with a ranking function is autoresearchable if you have a quality metric. The agent modifies ranking parameters, runs the experiment on a traffic slice, evaluates the metric, keeps or reverts. This is what Google and Facebook have been doing at scale for years. The Karpathy Loop makes it accessible to any team with an engineer and a weekend.
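Running a ranking experiment on a traffic slice usually means deterministically assigning a small, stable fraction of users to the candidate. One common approach, assumed here rather than taken from the source, hashes the user ID together with an experiment name so the same user always sees the same variant. The linear scoring weights are a hypothetical stand-in for whatever ranking parameters the agent is allowed to modify.

```python
import hashlib
from typing import Dict, List

def in_slice(user_id: str, experiment: str, fraction: float) -> bool:
    """Stable assignment: the same user and experiment always land in the same bucket."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000   # 0..9999
    return bucket < fraction * 10_000

def rank(items: List[dict], weights: Dict[str, float]) -> List[dict]:
    """Order items by a weighted sum of their features; the weights are the tunable parameters."""
    return sorted(items, key=lambda it: sum(w * it[f] for f, w in weights.items()), reverse=True)

control_weights = {"relevance": 1.0, "recency": 0.2}
candidate_weights = {"relevance": 1.0, "recency": 0.6}   # the agent's proposed change

items = [
    {"id": 1, "relevance": 0.9, "recency": 0.1},
    {"id": 2, "relevance": 0.7, "recency": 0.9},
]
user = "user-42"
weights = candidate_weights if in_slice(user, "recency-boost-v1", 0.05) else control_weights
print([it["id"] for it in rank(items, weights)])
```

Including the experiment name in the hash means each new experiment reshuffles who lands in the slice, so the same 5% of users are not the test group for everything.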

Drug Discovery: 300 Experiments Where You Once Ran 3

The Karpathy Loop maps almost perfectly onto molecular optimisation.

A pharmaceutical researcher iterates on a molecular structure, runs assays or simulations, measures binding affinity, toxicity, and selectivity, and repeats. A human researcher might run a handful of meaningful iterations per week. An agent running the Karpathy Loop runs hundreds overnight.

The requirements are already present in modern computational chemistry. In silico experiments (docking, molecular dynamics simulation, ADMET prediction) can run without human intervention. Objective metrics exist: binding affinity scores, IC50, selectivity ratios. Version control for molecular structures is trivial, because a structure can be stored as text, for example as a SMILES string.
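Those metrics can be folded into a single keep-or-revert decision. The sketch below converts IC50 to pIC50 (the negative base-10 logarithm of the molar IC50) and computes selectivity as off-target IC50 divided by on-target IC50. The toxicity flag, the 10x selectivity floor, the placeholder SMILES strings, and the IC50 values are all illustrative assumptions; real programmes weigh these trade-offs far more carefully.

```python
from dataclasses import dataclass
from math import log10

@dataclass(frozen=True)
class Candidate:
    smiles: str                # structure stored as text, so it versions like code
    ic50_target_nm: float      # potency against the intended target, in nM
    ic50_off_target_nm: float  # potency against an unwanted target, in nM
    predicted_toxic: bool      # assumed output of an ADMET model

def pic50(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); 1 nM = 1e-9 M. Higher means more potent."""
    return -log10(ic50_nm * 1e-9)

def selectivity(c: Candidate) -> float:
    """How many times more potent the molecule is on-target than off-target."""
    return c.ic50_off_target_nm / c.ic50_target_nm

def keep(new: Candidate, current: Candidate, min_selectivity: float = 10.0) -> bool:
    """Keep a modification only if it passes the hard gates and improves potency."""
    if new.predicted_toxic or selectivity(new) < min_selectivity:
        return False
    return pic50(new.ic50_target_nm) > pic50(current.ic50_target_nm)

# Placeholder structures and invented numbers, not real compounds or data.
current = Candidate("CCO", ic50_target_nm=100.0, ic50_off_target_nm=5000.0, predicted_toxic=False)
analogue = Candidate("CCN", ic50_target_nm=20.0, ic50_off_target_nm=2000.0, predicted_toxic=False)
print(round(pic50(current.ic50_target_nm), 2), round(pic50(analogue.ic50_target_nm), 2))
print(keep(analogue, current))
```

Treating toxicity and selectivity as hard gates rather than terms in a weighted sum stops the agent from trading a safety problem for a potency gain.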

Groups like Recursion Pharmaceuticals, Insilico Medicine, and Isomorphic Labs are already running variants of this loop. The commercial opportunity is productising it for mid-sized pharma and biotech that cannot afford bespoke AI infrastructure.

The difference between a research team running 3 experiments per week and one running 300 is not incremental. It is the difference between a 10-year drug development timeline and a 3-year one.

Finance: PnL as val_bpb

A trading strategy is a script. PnL is val_bpb. The agent modifies strategy parameters: entry signals, position sizing, risk rules, rebalancing frequency. It runs a backtest, evaluates performance, keeps improvements, reverts failures. Quantitative finance has been autoresearched by the largest hedge funds for decades. The Karpathy Loop makes it accessible to smaller funds and family offices that previously lacked the engineering depth to build automated research pipelines.
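A toy version of that loop, assuming a simple moving-average crossover strategy on a synthetic price series (no real market data, no transaction costs, no slippage). The agent’s “change” is a different pair of window lengths, and the metric is backtest PnL. It is a simplified illustration, not a trading system.

```python
import random
from typing import List, Tuple

def backtest(prices: List[float], fast: int, slow: int) -> float:
    """PnL of holding one unit whenever the fast moving average is above the slow one.

    The position for day t uses averages of days before t only, so the strategy
    never trades on information it could not have had.
    """
    pnl = 0.0
    for t in range(slow, len(prices)):
        fast_ma = sum(prices[t - fast:t]) / fast
        slow_ma = sum(prices[t - slow:t]) / slow
        if fast_ma > slow_ma:
            pnl += prices[t] - prices[t - 1]
    return pnl

def search(prices: List[float], iterations: int = 100, seed: int = 0) -> Tuple[Tuple[int, int], float]:
    rng = random.Random(seed)
    best = (5, 20)
    best_pnl = backtest(prices, *best)
    for _ in range(iterations):
        fast = rng.randint(2, 20)
        slow = rng.randint(fast + 5, 60)
        pnl = backtest(prices, fast, slow)
        if pnl > best_pnl:                      # keep the improvement
            best, best_pnl = (fast, slow), pnl
        # otherwise the previous parameters stay in place (the revert)
    return best, best_pnl

rng = random.Random(1)
prices = [100.0]
for _ in range(500):
    prices.append(prices[-1] + rng.gauss(0.05, 1.0))   # synthetic random walk with drift
print(search(prices))
```

Picking the best of many parameter pairs on a single price history is also the easiest way to overfit: the winner may simply be the pair that best matched this particular stretch of noise.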

Two constraints make this harder than marketing. Regulatory compliance requires documentation and approval for certain automated strategy changes, and overfitting means a strategy that performs well in backtest often fails live. Both can be addressed with additional guardrails, such as human sign-off before a change goes live and validation on data the agent never optimised against. Neither changes the underlying loop.
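One guardrail for overfitting, sketched here as an assumption rather than a prescribed method, is to split history into an in-sample period the agent may search and a held-out period it never optimises against, then keep a change only if it improves in-sample without degrading out-of-sample. The function is deliberately generic: it accepts any backtest that returns PnL. `toy_backtest` is hypothetical and exists only so the example runs.

```python
from typing import Callable, Sequence, Tuple

Params = Tuple[int, ...]

def accept_change(
    prices: Sequence[float],
    current: Params,
    proposed: Params,
    backtest: Callable[[Sequence[float], Params], float],
    holdout_fraction: float = 0.3,
) -> bool:
    """Keep `proposed` only if it beats `current` in-sample without losing out-of-sample."""
    split = int(len(prices) * (1 - holdout_fraction))
    train, holdout = prices[:split], prices[split:]
    improves_train = backtest(train, proposed) > backtest(train, current)
    holds_up = backtest(holdout, proposed) >= backtest(holdout, current)
    return improves_train and holds_up

def toy_backtest(series: Sequence[float], params: Params) -> float:
    # Hypothetical strategy: hold params[0] units for the whole period.
    return params[0] * (series[-1] - series[0])

rising_then_falling = [100, 102, 104, 106, 108, 110, 112, 109, 105, 101]
# Doubling the position wins in-sample but loses on the held-out tail, so it is rejected.
print(accept_change(rising_then_falling, current=(1,), proposed=(2,), backtest=toy_backtest))
```

The held-out period only stays honest while the agent cannot see it; once its results start steering the search, it becomes in-sample data too.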

The same competitive pressure applies here as in marketing. As explored in Prediction Markets Are the Most Interesting AI Story You’re Not Following, AI is already reshaping how fast information moves through financial systems. Automated experiment loops are the next layer.

The New Core Competency

Here is what is not being said loudly enough: the Karpathy Loop is not primarily about AI. It is about iteration velocity. AI matters because it is the first thing that makes the loop fast enough to pay off.

Every business runs experiments. The question has always been how many you can run and how fast you can learn from them. For most of history, the bottleneck was human time. Someone had to design the variant, set up the test, analyse the results, make a decision. The Karpathy Loop removes humans from the critical path of each iteration. They remain in the loop: setting direction, approving parameters, reviewing significant findings. But they are no longer the rate limiter.

The uncomfortable implication: the skill that matters going forward is not running experiments well. It is designing the loop well.

What is your metric? What is your version control? What constraints do you put on the agent’s search space so it finds signal instead of noise?
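Those questions can be answered in writing before any agent runs. The sketch below is one hypothetical shape for a loop specification: a named metric with a direction, an allow-list of variables with bounds (the search-space constraint), and a threshold above which a finding is escalated to a human instead of being applied automatically. None of these field names come from autoresearch.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

@dataclass
class LoopSpec:
    metric: str                                   # e.g. "conversion_rate"
    higher_is_better: bool                        # False for losses such as val_bpb
    bounds: Dict[str, Tuple[float, float]] = field(default_factory=dict)  # allowed variables
    review_threshold: float = 0.10                # relative change that needs human sign-off

    def allowed(self, change: Dict[str, float]) -> bool:
        """Reject any change that touches an unlisted variable or leaves its bounds."""
        return all(
            name in self.bounds and self.bounds[name][0] <= value <= self.bounds[name][1]
            for name, value in change.items()
        )

    def improvement(self, old: float, new: float) -> float:
        """Relative improvement, sign-corrected so positive always means better (old != 0)."""
        delta = (new - old) / abs(old)
        return delta if self.higher_is_better else -delta

    def needs_review(self, old: float, new: float) -> bool:
        """Large swings in either direction go to a human before anything ships."""
        return abs(self.improvement(old, new)) >= self.review_threshold

spec = LoopSpec(
    metric="conversion_rate",
    higher_is_better=True,
    bounds={"discount_pct": (0, 20), "trial_days": (7, 30)},
)
print(spec.allowed({"discount_pct": 15}))   # inside the search space
print(spec.allowed({"discount_pct": 50}))   # outside its bounds
print(spec.allowed({"price": 9.99}))        # not a variable the agent may touch
print(spec.needs_review(0.040, 0.050))      # a 25% jump goes to a human
```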

Karpathy called this “programming the program.” The businesses that figure this out in 2026 will build a compounding advantage that could be almost impossible to close by 2028. The gap between 30 experiments per year and 36,500 is not just a speed difference. It is a knowledge difference.

And knowledge compounds.

Sources: VentureBeat, Fortune, MindStudio, The New Stack, autoresearch GitHub, Eric Siu / Single Grain.
